Aivero at GStreamer Conference 2019 in Lyon

GStreamer is one of the most widely used frameworks for multimedia handling and is used by a vast range of open and closed source projects and products. Remember the 2017 Nobel Prize in Physics, awarded for the observation of gravitational waves? That research was powered by GStreamer. It also happens to be used on virtually every smart TV. At Aivero we use it as one of our building blocks.

The annual GStreamer conference gathers developers from around the globe working on projects ranging from CCTV and industrial machine vision applications to smart TVs and web streaming platforms. As an open source project, GStreamer is developed by the companies that use it, with coordination by the team at Centricular Ltd. The conference is always followed by a GStreamer Hackfest, which gives participants the opportunity to work on common or company-specific GStreamer issues and to exchange insights and solutions in an informal setting.

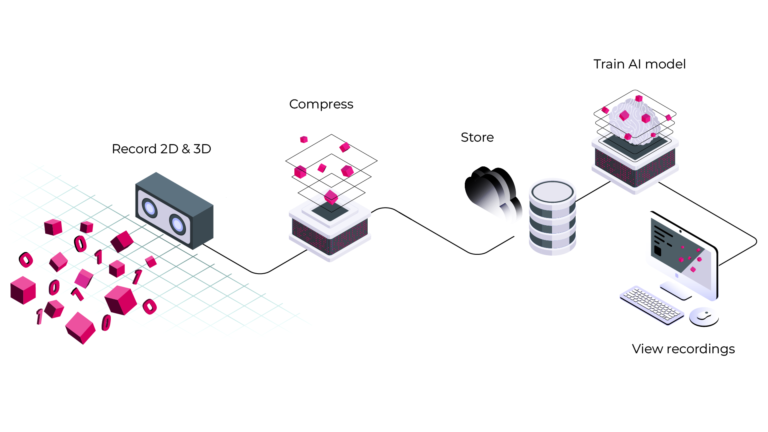

During the conference, our CTO, Raphael Dürscheid, presented our recent open source work on adding support for RGB-D (depth) cameras to GStreamer, as well as our work on bringing our proprietary depth video compression to GStreamer.

The components we open sourced allow developers to work with all video streams coming from Intel’s RealSense cameras, with support for Microsoft’s Azure Kinect camera coming soon.

We defined a simple interface that can support all types of RGB-D cameras, and we encourage developers interested in working with these cameras to consider GStreamer and the high-performance video handling it offers.

Niclas gave a lightning talk on how we use conan.io to build and package our dependencies and our GStreamer elements, which are written in Rust and C++.

We have open sourced a set of GStreamer elements for opening the video streams from RGB-D cameras. Specifically, we support the Intel RealSense D400 series cameras and are close to supporting Microsoft's new Kinect for Azure.

- realsensesrc: https://gitlab.com/aivero/public/gstreamer/gst-realsense/-/releases

- K4asrc (TBD)

The source elements above produce our newly defined (open source) `video/rgbd` interface, often called a `caps type` in GStreamer. This interface gives element developers access to the frames from all enabled devices onboard the camera, taken at the same time. Certain types of post-processing will benefit from low-overhead access to this matched set of frames.

Furthermore, we released the `rgbdmux` and `rgbddemux` elements. The former muxes elementary streams into `video/rgbd`, and the latter demuxes `video/rgbd` into the elementary streams it contains. On the D400 series, these would be `depth`, `infra1`, `infra2` and `colour`.
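To make the idea of muxing and demuxing a matched set of frames more concrete, here is a toy model in plain Python. It is only a sketch of the concept, not the actual elements: the `Frame` and `RgbdFrameSet` types, the `mux` and `demux` functions, and the requirement that timestamps match exactly are all assumptions made for illustration.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass(frozen=True)
class Frame:
    """One frame of one elementary stream (hypothetical type)."""
    stream: str        # e.g. "depth" or "colour"
    timestamp_ns: int  # capture time in nanoseconds
    data: bytes        # placeholder for the pixel payload


@dataclass(frozen=True)
class RgbdFrameSet:
    """A matched set of frames taken at the same time (hypothetical type)."""
    timestamp_ns: int
    frames: Dict[str, Frame]


def mux(frames: List[Frame]) -> RgbdFrameSet:
    """Bundle frames that share one capture time into a single frame set."""
    if not frames:
        raise ValueError("need at least one frame")
    timestamps = {f.timestamp_ns for f in frames}
    # Simplifying assumption: frames only match if timestamps are identical.
    if len(timestamps) != 1:
        raise ValueError("frames were not captured at the same time")
    return RgbdFrameSet(timestamps.pop(), {f.stream: f for f in frames})


def demux(frame_set: RgbdFrameSet) -> Dict[str, Frame]:
    """Split a frame set back into its elementary streams, keyed by name."""
    return dict(frame_set.frames)
```

In this model, `mux` followed by `demux` returns the same frames that went in, which is the round trip the two elements are meant to provide at the stream level.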

These demuxed video streams can now be used like any other video stream inside GStreamer, giving developers access to all the powerful tools GStreamer provides.
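As a rough illustration of what this could look like in practice, the helper below assembles a `gst-launch`-style pipeline description with one branch per demuxed stream. It only builds a string and does not need GStreamer installed. The pad naming pattern `src_<stream>` and the absence of any source properties are assumptions, not confirmed behaviour of the released elements; `gst-inspect-1.0 rgbddemux` would show the real pad templates.

```python
from typing import Iterable


def demux_pipeline(streams: Iterable[str], sink: str = "fakesink") -> str:
    """Return a gst-launch style description with one branch per stream.

    ASSUMPTION: the demuxer exposes pads named src_<stream>. The helper
    name and this pad pattern are hypothetical and used for illustration.
    """
    head = "realsensesrc ! rgbddemux name=demux"
    # Each branch picks one demuxed stream and hands it to ordinary elements.
    branches = [f"demux.src_{name} ! queue ! {sink}" for name in streams]
    return " ".join([head, *branches])


if __name__ == "__main__":
    print(demux_pipeline(["depth", "colour"]))
```

If the assumptions hold on a machine with the plugins installed, the resulting string could be handed to `gst-launch-1.0` or `Gst.parse_launch`, and `fakesink` could be swapped for an encoder, a display sink or any other downstream element.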

rgbdmux, rgbddemux: https://gitlab.com/aivero/public/gstreamer/gst-rgbd/-/releases#0.1.5